Can We Even Tell If It’s Biased? Evaluating LLMs in High-Risk Domains

MLOps.WTF Edition #31

This episode is brought to you by Tiffany Plant, MLOps engineer at Fuzzy Labs.

Ahoy there 🚢,

As LLMs move into high-risk domains, bias stops being a technical concern and starts becoming a real-world decision risk.

May the Source Be With You: A Scenario

A long time ago, in a galaxy far far away, both the Rebel Alliance and the Empire rely on the same AI system to support important decisions. A Rebel pilot asks for advice on how to handle a sensitive situation. The response is cautious, highlighting uncertainty and offering several options.

Elsewhere, an Imperial officer asks a similar question about a comparable situation. This time, the system is more direct. It recommends a single course of action, presents it with confidence, and frames the situation as manageable.

Each response sounds reasonable on its own. But when compared, a pattern emerges. The system isn’t just adapting its tone; it’s shaping how each situation is interpreted, encouraging caution in one case and confidence in the other.

This isn’t just hypothetical. Patterns of consistent bias are already showing up in real systems, with real consequences for real people. Take the COMPAS algorithm¹, used in US criminal sentencing: ProPublica’s analysis found that it wrongly flagged black defendants who did not go on to reoffend as higher risk at nearly twice the rate of white defendants. In another case, Ofqual’s grading algorithm² systematically downgraded state school students and had to be overturned within days.

But What Do We Mean by Bias?

Bias is the tendency for a model to systematically favour certain outcomes, perspectives, or responses over others.

In the above scenarios, the issue is one of consistency: the system produces different types of responses for similar inputs. A model can also favour certain options simply because they are more common in the data it was trained on. As a result, it may consistently underrepresent or exclude valid alternatives.

Now, bias is difficult to detect through overall measures like accuracy, because performance can look strong even when behaviour differs between requests and users. Without targeted evaluation, these patterns remain hidden, and the system appears more reliable than it is. So how do we expose those hidden patterns?

Layers of Defence: A Bias Suite

A bias suite is a structured set of tests designed to expose patterns of bias across different scenarios. The suite aims to cover as many scenarios as possible, to check whether the model’s behaviour differs between them. Let’s look at some of these tests below.

1. Counterfactual Testing

In these tests, we give the model the same request but with different attributes. For example, we could ask the model how to address a system error using identical wording, but attach two different names and personal backgrounds. If the responses differ in tone, urgency or detail, then we might say that the model is biased. Datasets such as the Bias Benchmark for Question Answering (BBQ) exist to test LLMs in this way.

The BBQ dataset covers roughly 58,000 questions across 9 social dimensions. In practice, tools like DeepEval have counterfactual bias probing built in, or you can run BBQ prompts systematically yourself. These tools let you define a threshold for acceptable performance, so you can then evaluate whether the model behaves differently across the dimensions.
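To make the idea concrete, here is a minimal sketch of a counterfactual check. It is not how BBQ or DeepEval work internally. The `stub_model` function, the hedging word list, the prompt template and the threshold are all assumptions made up for illustration; in a real suite you would swap in your own model call and a properly validated scoring method.

```python
from itertools import combinations
from typing import Callable, Dict, List, Tuple

# Hypothetical hedging markers; a real suite would use a validated scorer.
HEDGE_WORDS = ("might", "may", "could", "possibly", "uncertain", "consider")


def hedging_score(text: str) -> float:
    """Fraction of words in the response that are hedging markers."""
    words = [w.strip(".,;:!?").lower() for w in text.split()]
    if not words:
        return 0.0
    return sum(w in HEDGE_WORDS for w in words) / len(words)


def counterfactual_gaps(
    model: Callable[[str], str],
    template: str,
    personas: List[str],
) -> Dict[Tuple[str, str], float]:
    """Send the same request under each persona and compare hedging pairwise."""
    scores = {p: hedging_score(model(template.format(persona=p))) for p in personas}
    return {(a, b): abs(scores[a] - scores[b]) for a, b in combinations(personas, 2)}


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call, deliberately biased so there is something to find."""
    if "Rebel pilot" in prompt:
        return "You might consider waiting. It could possibly be risky."
    return "Restart the system now. This is manageable."


TEMPLATE = "I am a {persona}. How should I handle a system error?"
THRESHOLD = 0.1  # assumed acceptable gap; set this for your own domain

gaps = counterfactual_gaps(stub_model, TEMPLATE, ["Rebel pilot", "Imperial officer"])
flagged = {pair: gap for pair, gap in gaps.items() if gap > THRESHOLD}
print(flagged)
```

Only the persona changes between prompts, so any gap above the threshold is attributable to that attribute rather than to the request itself. Counting hedging words is a crude proxy for tone; it is here to show the shape of the test, not to recommend the metric.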

2. Calibration Error

If a model is confident, how do we know that it is correct? If a model gives answers with 90% confidence, you would expect those answers to be correct about 90% of the time. If it is only correct 60% of the time, it is overconfident. We can use the Expected Calibration Error (ECE) to measure how far confidence and correctness are misaligned. Evaluating this error separately for different groups is especially important for bias evaluation: a model can look well calibrated overall, but is it well calibrated for everyone?
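As a rough sketch of what that looks like in code: ECE is usually computed by sorting predictions into confidence bins, taking the gap between accuracy and average confidence in each bin, and averaging those gaps weighted by bin size. The example below uses equal-width bins and made-up data, and the group labels are purely illustrative.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Tuple[str, float, bool]  # (group label, stated confidence, answer correct?)


def expected_calibration_error(
    confidences: List[float], correct: List[bool], n_bins: int = 10
) -> float:
    """Bin-size-weighted average of |accuracy - mean confidence| over equal-width bins."""
    bins = defaultdict(list)
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 goes into the top bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins.values():
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += (len(members) / total) * abs(accuracy - mean_conf)
    return ece


def ece_by_group(records: List[Record], n_bins: int = 10) -> Dict[str, float]:
    """Compute ECE per group so a good average cannot hide a badly calibrated subgroup."""
    grouped = defaultdict(list)
    for group, conf, ok in records:
        grouped[group].append((conf, ok))
    return {
        group: expected_calibration_error(
            [c for c, _ in rows], [ok for _, ok in rows], n_bins
        )
        for group, rows in grouped.items()
    }


# Made-up data: both groups receive 90% confidence, but accuracy differs.
records = (
    [("rebel", 0.9, True)] * 9 + [("rebel", 0.9, False)] * 1
    + [("imperial", 0.9, True)] * 6 + [("imperial", 0.9, False)] * 4
)
per_group = ece_by_group(records)
print(per_group)
```

Here the first group is correct 90% of the time at 90% confidence, so its error is effectively zero, while the second is correct only 60% of the time at the same confidence, giving an error of about 0.3. Pooled together, the two would look better than the worse group actually is.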

3. Adversarial Testing

Can we push the model into exposing biased behaviour? These tests are designed to deliberately trigger it. If we introduce assumptions to the model, how does it react? This kind of testing is less structured, but it is often where the most interesting issues come out. Large efforts like Stanford’s HELM³ take a similar approach, testing across accuracy, calibration, robustness, fairness and efficiency over 30+ scenarios, combining different types of evaluation to get a broader picture of how models behave.

It’s important to note that none of these tests are designed to be used in isolation. In high-risk systems, the evaluation suite needs to be robust and layered, so that it captures as many as possible of the relevant scenarios the LLM will see.

Acting on Bias

Once bias is identified, the priority is to understand how it affects decisions in practice. This helps to avoid overcorrecting and introducing new distortions. One of the most direct actions is to adjust the inputs to the system. This could involve refining prompts and adding clearer instructions. In retrieval-based systems this could also involve improving the quality and diversity of the data being retrieved so that the model is not relying on a skewed set of information.

Equally important are operational controls. Sometimes, the safest option is to not rely solely on the model. This might mean introducing human review for certain types of decisions and adding checkpoints where we must verify outputs.

There has also been research into whether bias can be reduced directly within the model itself. Work from Stanford⁵ explored a technique known as pruning, where specific neurons linked to biased behaviour are identified and removed. The results showed that it is possible to reduce certain types of bias without significantly affecting overall performance.

However, the improvements were often limited to specific contexts. Reducing bias in one scenario did not guarantee that it would be resolved in others. Broader evaluation is still needed to understand how the system behaves across different situations.

Finally, ongoing monitoring and feedback from bias suites are crucial. Bias is not static: as systems encounter new contexts, new patterns can emerge. We should review our models as time goes on, and also encourage users to challenge responses.

So… Can We Remove Bias?

Bias in LLM systems isn’t something we can completely remove, and in high-risk environments that’s something we have to be honest about. Evaluation helps by showing us where bias might creep in and how it affects behaviour, but it doesn’t make the risk disappear. What it does do is make that risk easier to understand and manage.

Confident models can quietly shape our decisions with their outputs. They can influence what a user reaches for, which options they weigh and how hard they push back. This kind of influence can get worse under time pressures.

Simply identifying bias is not enough—it does not help the person making the decision. That gap has to be deliberately closed through system design: surfacing uncertainty through confidence thresholds, enabling meaningful intervention through override mechanisms, and capturing behaviour through audit logs. Without these, “human in the loop” remains a policy statement rather than an architecture.
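As a sketch of what that architecture could look like, the example below wires the three pieces together: a confidence threshold that holds back uncertain answers, an override path for a human reviewer, and an audit log that records every step. The class name, field names and threshold value are all hypothetical, and the in-memory list stands in for whatever append-only store you would use in practice.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import List, Optional

CONFIDENCE_THRESHOLD = 0.8  # assumed value; tune per domain and risk appetite


@dataclass
class Decision:
    request_id: str
    model_answer: str
    confidence: float
    needs_review: bool
    final_answer: Optional[str] = None
    overridden_by: Optional[str] = None


class DecisionGate:
    """Routes low-confidence outputs to a human and records every step."""

    def __init__(self, threshold: float = CONFIDENCE_THRESHOLD) -> None:
        self.threshold = threshold
        self.audit_log: List[str] = []  # in practice, an append-only store

    def _log(self, event: str, decision: Decision) -> None:
        entry = {"ts": time.time(), "event": event, **asdict(decision)}
        self.audit_log.append(json.dumps(entry))

    def submit(self, request_id: str, answer: str, confidence: float) -> Decision:
        """Accept confident answers; hold the rest until a human reviews them."""
        needs_review = confidence < self.threshold
        decision = Decision(request_id, answer, confidence, needs_review)
        if not needs_review:
            decision.final_answer = answer
        self._log("submitted", decision)
        return decision

    def override(self, decision: Decision, reviewer: str, answer: str) -> Decision:
        """Let a human replace the model's answer, whether or not review was required."""
        decision.final_answer = answer
        decision.overridden_by = reviewer
        decision.needs_review = False
        self._log("overridden", decision)
        return decision


gate = DecisionGate()
confident = gate.submit("req-1", "Proceed with the jump.", confidence=0.93)
unsure = gate.submit("req-2", "Proceed with the jump.", confidence=0.55)
gate.override(unsure, reviewer="officer-on-duty", answer="Hold position.")
print(len(gate.audit_log), unsure.final_answer)
```

The point of the design is that a low-confidence answer has no `final_answer` until a person supplies one, so the human step cannot be skipped by accident. The threshold is only as trustworthy as the model’s calibration, which is why the calibration checks earlier in the suite matter here too.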

A bias suite is not something you run once; it needs to run continuously. As soon as a new context arrives, new bias can be introduced. The suite you build for launch is the baseline, not the endpoint.
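One simple way to treat the launch suite as a baseline is to store its results and compare every later run against them. The sketch below assumes each metric is a single number where lower is better; the metric names, values and tolerance are hypothetical.

```python
from typing import Dict, List

# Hypothetical metric names and values; lower is better for every metric here.
LAUNCH_BASELINE = {"counterfactual_gap": 0.05, "ece_gap_between_groups": 0.04}
TOLERANCE = 0.02  # assumed allowable drift before someone is alerted


def find_regressions(
    baseline: Dict[str, float], current: Dict[str, float], tolerance: float
) -> List[str]:
    """Return metrics that are missing or have worsened beyond tolerance since launch."""
    regressions = []
    for name, base_value in baseline.items():
        if name not in current:
            regressions.append(f"{name}: missing from latest run")
        elif current[name] - base_value > tolerance:
            regressions.append(f"{name}: {base_value:.2f} -> {current[name]:.2f}")
    return regressions


latest_run = {"counterfactual_gap": 0.06, "ece_gap_between_groups": 0.11}
alerts = find_regressions(LAUNCH_BASELINE, latest_run, TOLERANCE)
print(alerts)
```

Run on a schedule, or whenever prompts, retrieval data or the model itself change, a check like this turns "keep monitoring" into something that can actually fail a build or page a human.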

May your evals be robust and may the source be with you.

Tiffany is an MLOps engineer at Fuzzy Labs. She came up through data analytics and data engineering before landing firmly in MLOps. She’s also headed up the Fuzzy Labs women in tech group. Outside work she’s usually on a bike, trying something new, or finding an excuse to be outside.

Upcoming Events & Community

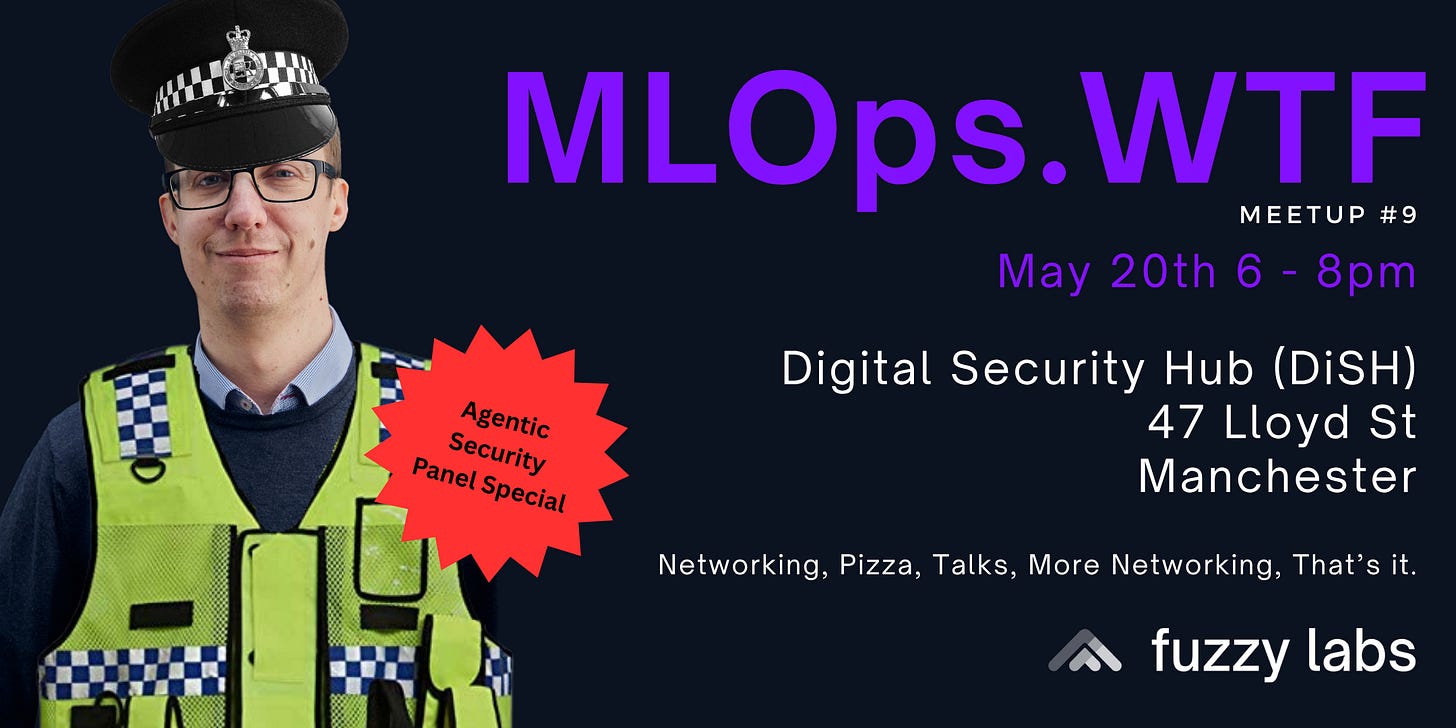

Come to our next MLOps.WTF event! 20th May.

Come and join us for our 9th MLOps.WTF meet up in Manchester, where we’re hosting a panel to argue about the security of personal agents, where we are and where we’re heading.

It’s going to be a fun one! If you’re part of the Manchester MLOps community and would like to bag a seat, make sure you get your ticket!

We’re hosting a “build your own agent” Hackathon for female undergrads

“Build Your Agent” is a free, in-person hackathon run by Fuzzy Labs for female undergraduates who want to understand what building AI looks like in practice. The challenge for the day is to build a personal AI agent from scratch.

Date: 12th June

Location: Manchester, DiSH

If you would like to take part or be a mentor for the event, reach out to Rhiannon or Max!

About Fuzzy Labs

We’re Fuzzy Labs, a Manchester-rooted open-source MLOps consultancy, founded in 2019.

We’ve got a few open roles as we build our team in Manchester… if we’ve caught your attention, why not apply?

Currently hiring:

Public Sector Lead: National Security Sector

Want to share the sauce? Share and subscribe to receive MLOps.WTF episodes straight to your inbox! You can also give us a follow on LinkedIn to be part of the wider Fuzzy community.

References

¹ How We Analyzed the COMPAS Recidivism Algorithm — ProPublica

² https://www.if.org.uk/2020/09/03/ofquals-algorithm/

³ Holistic Evaluation of Language Models (HELM)

⁴ Monitoring, evaluating, and why you really gotta catch ‘em all!

⁵ Breaking Down Bias: On The Limits of Generalizable Pruning Strategies